Category: eresearch

-

Would you like to chat? The Ethics of AI in Higher Education

I recently led a session at the eResearch Australasia conference on the ethics of AI in higher education. It is a big topic, and I'm fairly new to this stuff, but the conversation went well, and awareness of both AI and ethics is high in this community. The ethical challenges posed…

-

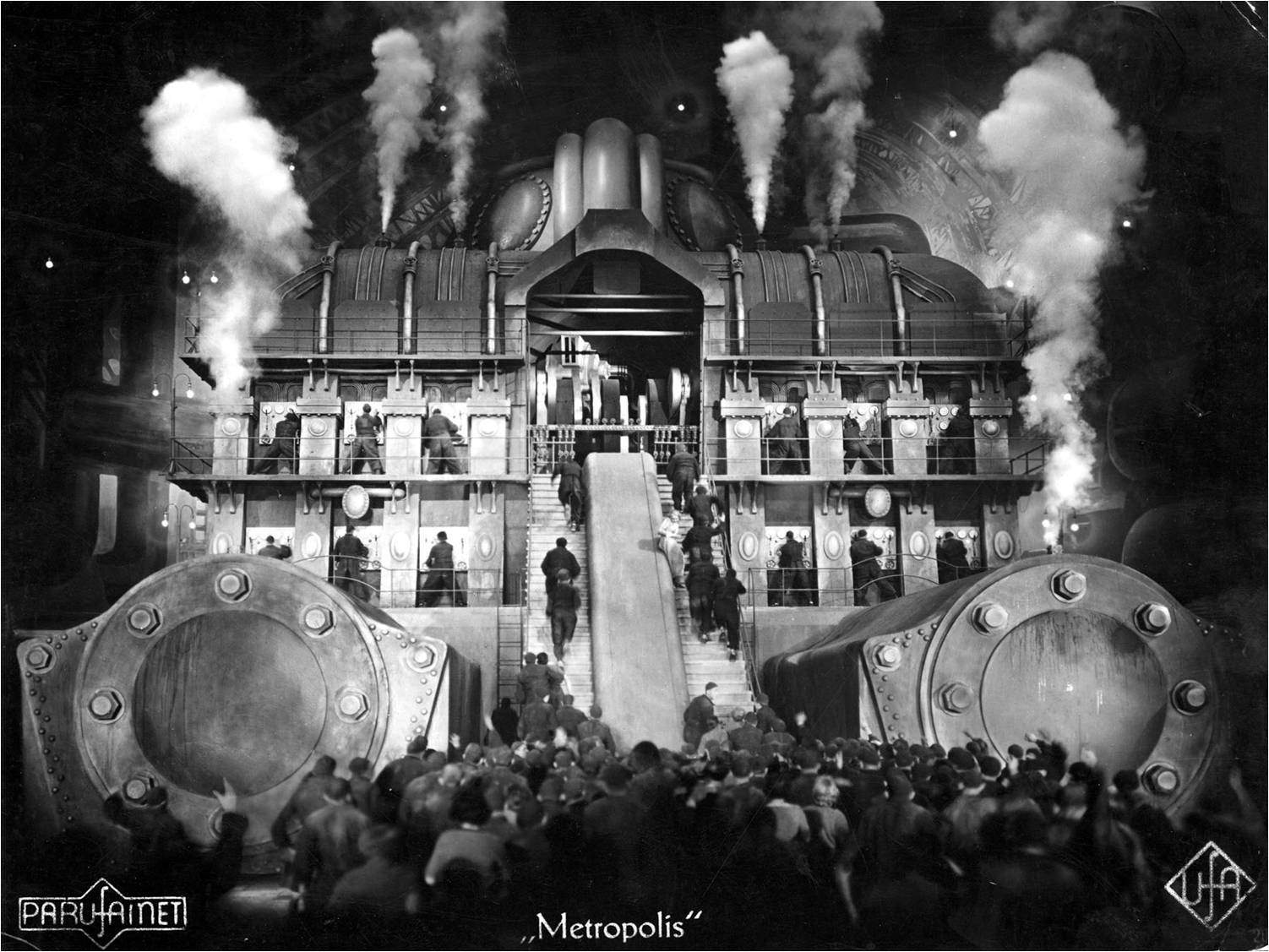

Opportunity and accountability in the eResearch push, Digital Humanities, 2012, Hamburg, Germany

I would like to open with an image from Fritz Lang's famous 1927 German Expressionist science fiction film, Metropolis. Made in Germany during the Weimar period, Metropolis depicts a futuristic dystopian society where wealthy intellectuals rule from the city above ground, oppressing the workers who live in the depths below them.…

-

Let the sun shine in…

-

Another vision?

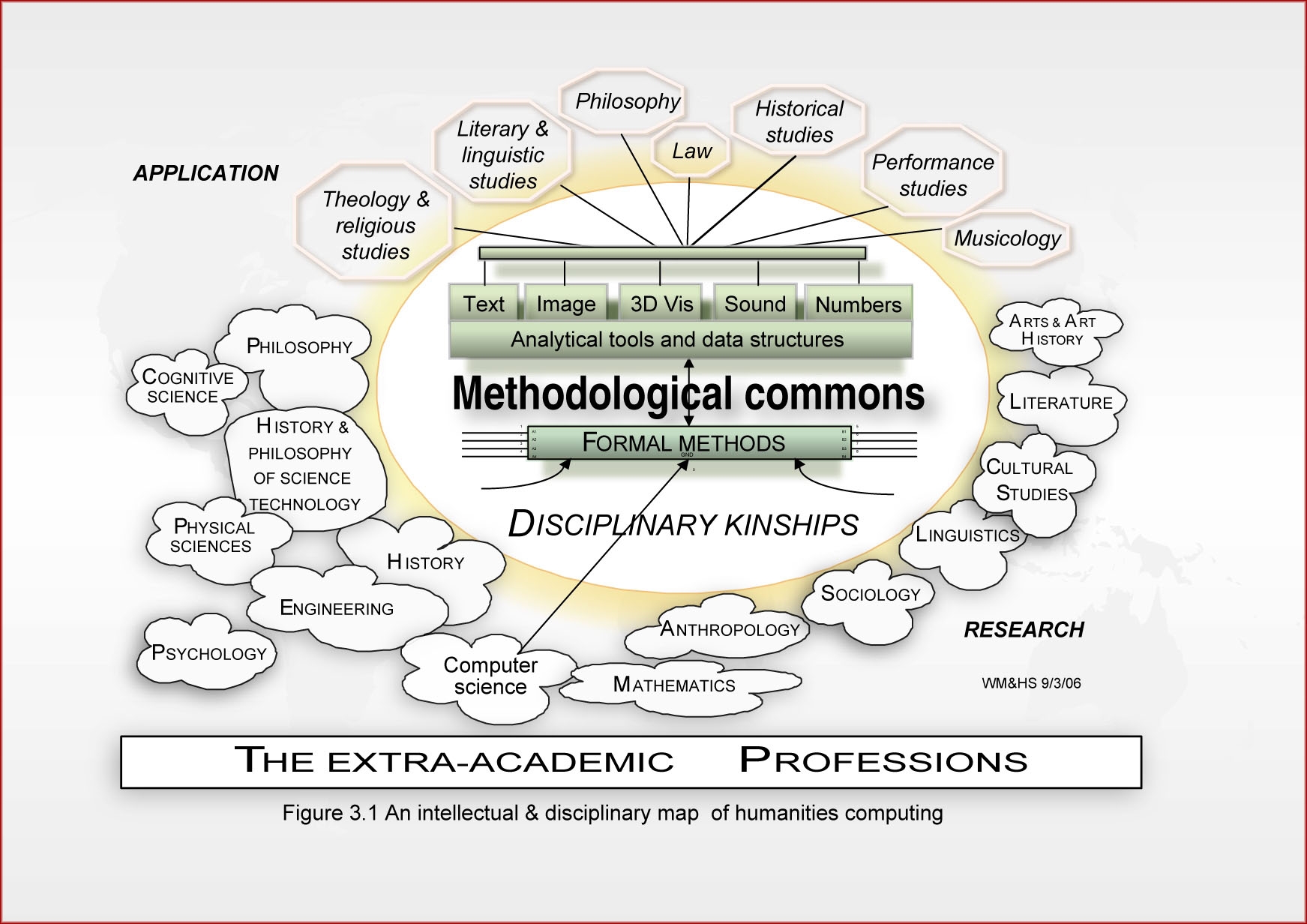

If there is to be another so-called broader vision of 'computers in the humanities' at this stage of development, much more work needs to be done on 'research into research' (especially into humanities research practices). The practical and urgent problems of science require many talented people to address…

-

eResearch and Digital Humanities: a broader vision?

I have been having many conversations of late about the boundaries between eResearch and Digital Humanities. I have received many divergent and exciting responses from researchers and professionals working in various ways with computing in the humanities. There tends to be little agreement about certain aspects of the landscape; many researchers have discovered…

-

Data versus method (data needs heads!)

I have been thinking a little more about the relationship between eResearch and Digital Humanities of late, partly because it is the subject of my talk at the Digital Humanities conference in Hamburg in July, and I want to do justice to what I see as a critical topic that hasn't been particularly well handled…